Summary

Strong noise on seismic data not only obscures the underlying signal but is also spread into other traces by subsequent linear processing. Consequently it is very important to remove it nonlinearly and, if we can, to recover some of the signal underneath. Failing that, we can at least use nearby traces to interpolate the signal. We present a nonlinear module, THOR, for removing and replacing this strong noise in seismic data. It uses thresholded median replacement in the frequency domain (Bekara et al., 2007; Elboth et al., 2010). By using short FFT windows in time we both combine the samples belonging to a single event and ensure that, if the amplitudes are modified, the output will still be smooth in time. If the median with a threshold for replacement is used to detect and remove strong noise on CDP gathers, then stack, prestack migration and white noise suppression can be used to suppress the remaining white noise. Because this module does not depend on noise coherency, removal of offline energy, noise bursts, ground roll and repeats of first breaks is very effective. Replacing all the data with the median does work, but the result is somewhat synthetic. The module may also be used to remove multiples.

In short this methodology is robust and effective and is recommended for routine use in the processing of seismic data.

Introduction

Strong noise can overwhelm stack, prestack migration and other linear processes. The √n cancellation that these processes provide for Gaussian noise, where n is the number of traces summed, may simply not be sufficient. To address this we created a nonlinear process named THOR (see Figure 1).
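
As a rough illustration of why averaging alone can fail, the sketch below compares a stack (mean) with a median at one time sample. The amplitudes and the position of the burst are made-up values chosen for the illustration, and this is not the THOR algorithm itself.

```python
from statistics import mean, median

def stack_and_median(amplitudes):
    """Return (mean, median) of one time sample across the traces of a gather."""
    return mean(amplitudes), median(amplitudes)

# 34 traces that all carry the same signal amplitude of 1.0 (illustrative values) ...
gather = [1.0] * 34
# ... except one trace hit by a noise burst 1000 times stronger.
gather[10] = 1000.0

stacked, med = stack_and_median(gather)
print(f"stack = {stacked:.2f}, median = {med:.2f}")
# prints: stack = 30.38, median = 1.00
```

A single burst leaves the stacked amplitude about 30 times too large, while the median is unaffected.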

Theory and/or Method

The process must be nonlinear: the noise is such that linear processes like stack and migration are failing. We do not want to alter traces from which signal can be recovered, so a threshold is needed above which the trace is recognized as badly contaminated. The data could be edited and, to preserve normalization, stack and migration could keep track of the trace weighting. However, if you do this you lose any underlying signal. This process does not use that approach. Instead it progressively replaces the trace data with an estimate of the signal built from both the trace itself and the adjacent traces.

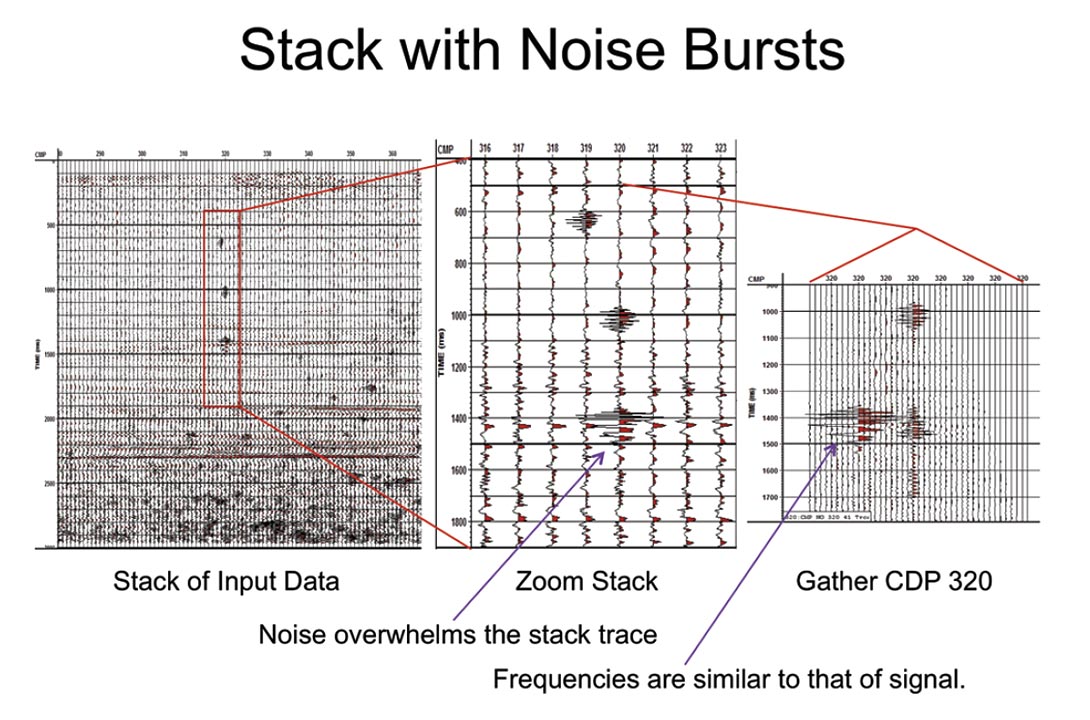

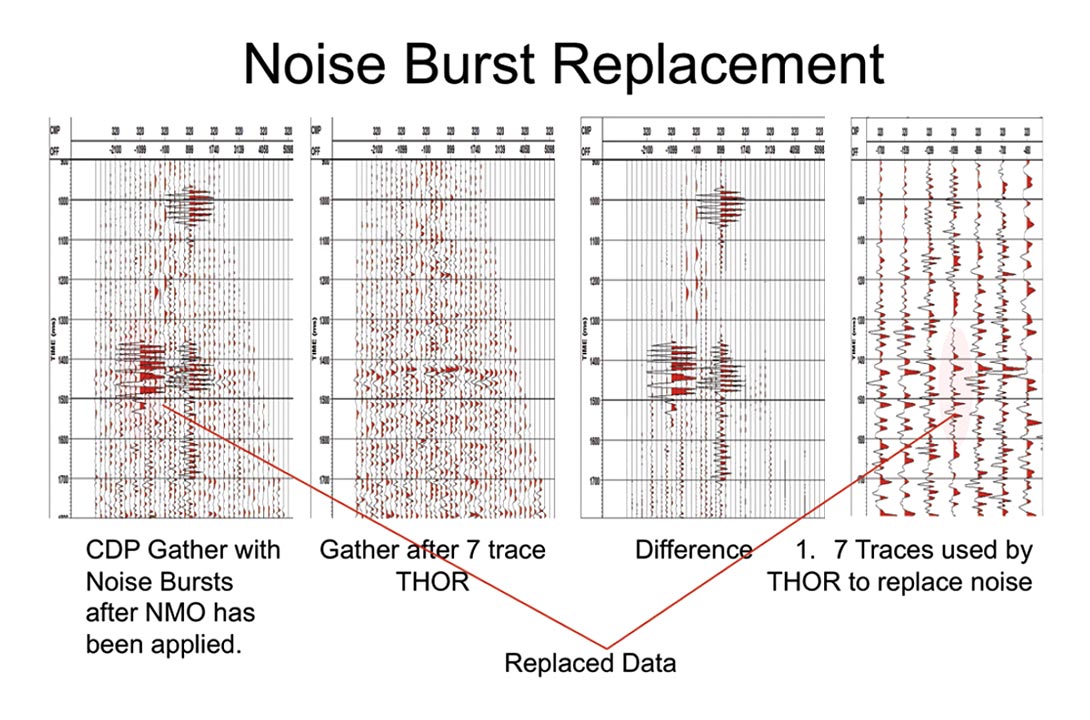

If the data has had NMO applied and is sorted into CDP gathers, the signal will be relatively consistent across a time slice. Figure 2 shows a CDP gather in which noise bursts have been replaced.

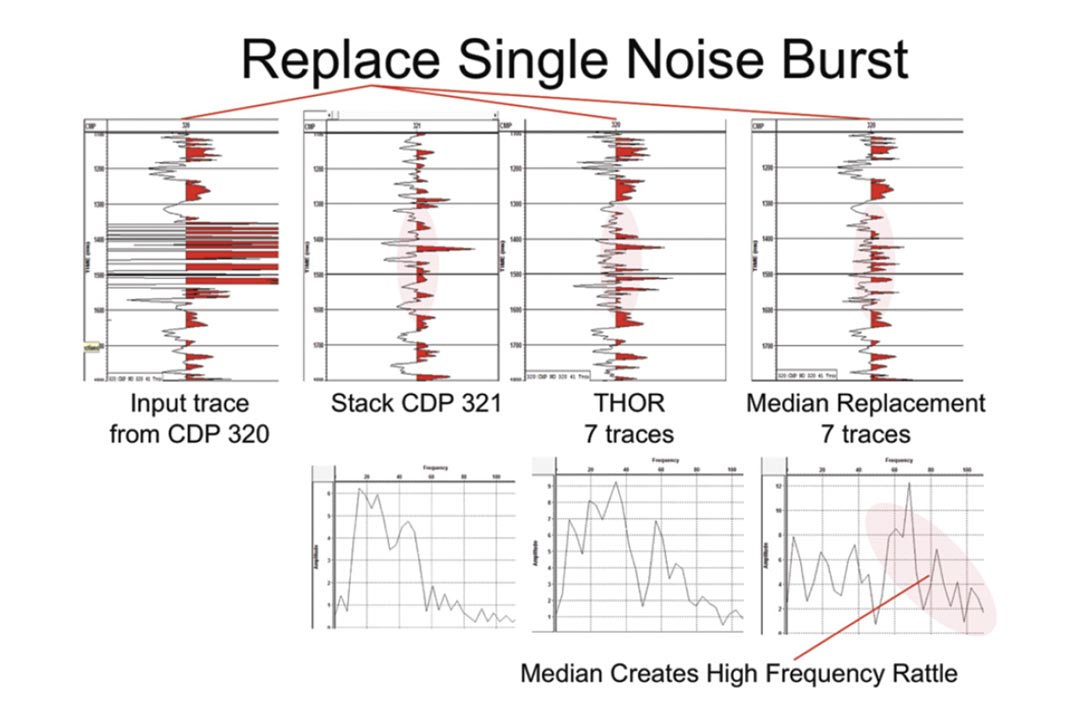

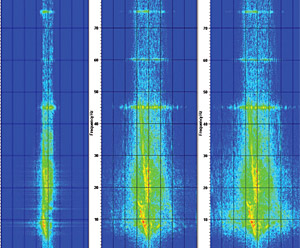

A median across the traces could be used, but medians rattle about with the background noise on the traces. Applying a median independently at each sample in time produces a high frequency rattle in time, as shown in Figure 3. Notice that the spectrum of the THOR replacement is much closer to that of the stack than the spectrum of the median is. The stack differs from the THOR result mostly because THOR is looking at only 7 traces as opposed to the 34 traces in the stack. Notice the difference plot: we are replacing some ground roll and high frequency noise as well as the spikes.

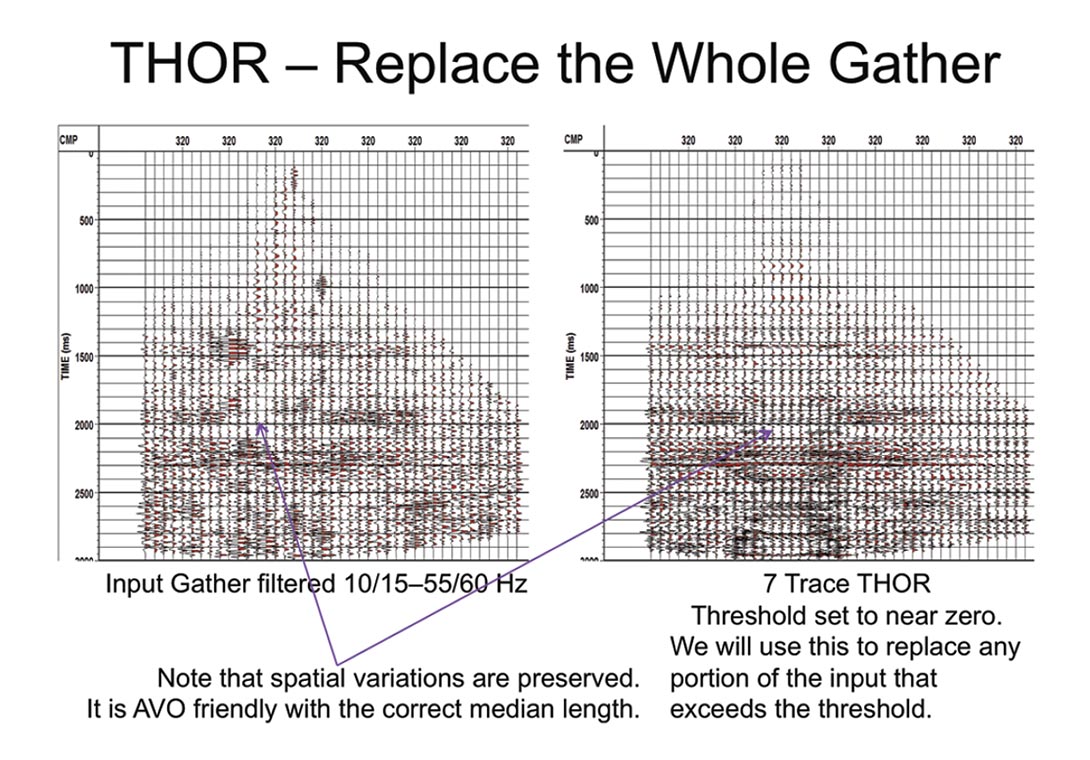

To show what the THOR process actually produces, the threshold has been set close to zero so that all the data in the gather has been replaced (see Figure 4). Note that the process follows the signal character in space and hence, with an appropriate median length, is AVO friendly. The module automatically sorts the data by offset and, if longer medians are needed, it can supergather CDPs.

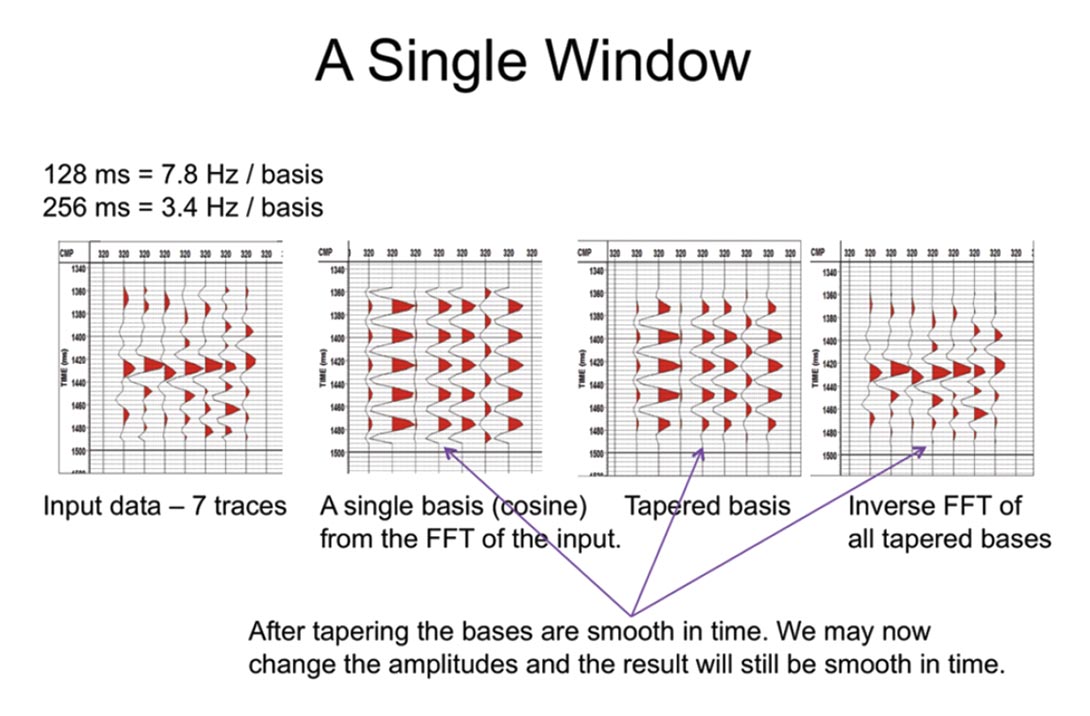

To see how this process works it must be realized that the signal is a wavelet. More than one sample in time must be considered if it is to be recognized and estimated in the presence of background noise. When I say wavelet, I am referring to the seismic wavelet convolved with the reflectivity. If we consider too many samples, the amplitude spectrum will become unstable from trace to trace. So, considering that the seismic wavelet is short and that the noise may have restricted frequency content, the data is transformed with an FFT in short overlapping windows in time, as shown in Figure 5.
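
A minimal sketch of cutting a trace into short overlapping tapered windows is given below. The Hann taper, the 50% overlap, the 32-sample window and the synthetic trace are assumptions made for the illustration, since the taper and overlap used by THOR are not stated here.

```python
import math

def hann_taper(n):
    """Periodic Hann taper; two copies shifted by n/2 samples sum to exactly 1."""
    return [0.5 - 0.5 * math.cos(2.0 * math.pi * i / n) for i in range(n)]

def split_windows(trace, n):
    """Cut a trace into tapered windows of n samples with 50% overlap."""
    taper = hann_taper(n)
    hop = n // 2
    starts = range(0, len(trace) - n + 1, hop)
    return [(s, [trace[s + i] * taper[i] for i in range(n)]) for s in starts]

def overlap_add(windows, length):
    """Sum the tapered windows back into a single trace."""
    out = [0.0] * length
    for start, samples in windows:
        for i, value in enumerate(samples):
            out[start + i] += value
    return out

# Synthetic trace and window length (assumed: 32 samples, e.g. 128 ms at 4 ms sampling).
trace = [float(i % 7) for i in range(256)]
n = 32
windows = split_windows(trace, n)
rebuilt = overlap_add(windows, len(trace))

# Away from the first and last half window, the unmodified windows rebuild the trace.
interior_error = max(abs(rebuilt[i] - trace[i]) for i in range(n // 2, len(trace) - n // 2))
print(len(windows), interior_error < 1e-9)
```

Because the overlapping tapers sum to one, any window whose amplitudes are later modified blends smoothly into its neighbours in time.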

There must be only a small amount of data in each window to ensure that the amplitudes for each basis function are consistent from trace to trace. That is, each basis function in the FFT must cover a wide band of frequencies (a 128 ms window gives about 7.8 Hz per basis function). Otherwise the amplitudes will tend to fluctuate randomly from trace to trace. For example, a peak that is primarily 30 Hz on one trace could be described on the next trace by 32 Hz if the window is long enough to give 2 Hz per basis function. A median can now be applied across the traces to the amplitude of each basis function separately, and the rattle that formerly appeared in time has been transferred into frequency. Provided that we taper the FFT basis functions in time, our output in the time domain is smooth whether we keep the original amplitudes or replace some of them with the median amplitudes. We can use the median ± a threshold to detect when the data is noisy and, if it is, progressively replace amplitudes with the median values.
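
The sketch below illustrates the idea for one frequency in one time window: the cosine (real) and sine (imaginary) amplitudes of 7 traces are each compared with their median across the traces and replaced when they fall outside the threshold. The function names, the absolute threshold, the linear ramp used for the progressive replacement and the coefficient values are all assumptions made for the illustration, not the actual THOR implementation.

```python
from statistics import median

def bin_spacing_hz(window_s):
    """Frequency spacing of the DFT basis functions for a window of window_s seconds."""
    return 1.0 / window_s

def replace_component(values, threshold):
    """Thresholded median replacement of one real amplitude per trace."""
    med = median(values)
    out = []
    for v in values:
        excess = abs(v - med) - threshold
        if excess <= 0.0:
            out.append(v)  # inside median +/- threshold: keep the original amplitude
            continue
        # Hypothetical ramp: fully replaced once the deviation reaches 2 * threshold.
        w = 1.0 if threshold <= 0.0 else min(1.0, excess / threshold)
        out.append((1.0 - w) * v + w * med)
    return out

def replace_coefficients(coeffs, threshold):
    """Apply the replacement to the cosine and sine terms separately."""
    re = replace_component([c.real for c in coeffs], threshold)
    im = replace_component([c.imag for c in coeffs], threshold)
    return [complex(r, i) for r, i in zip(re, im)]

# One FFT coefficient from each of 7 traces (illustrative values); trace 3 has a burst.
coeffs = [2.0 + 1.0j, 2.1 + 0.9j, 1.9 + 1.1j, 50.0 - 40.0j,
          2.0 + 1.0j, 2.2 + 1.0j, 1.8 + 0.8j]
cleaned = replace_coefficients(coeffs, threshold=0.5)

print(bin_spacing_hz(0.128))  # about 7.8 Hz for a 128 ms window
print(cleaned[3])             # the burst has been replaced by the median, (2+1j)
```

Only the contaminated trace is changed; the other six coefficients pass through untouched because they lie within the threshold of the median.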

So we can potentially recover a lot of the signal underneath the noise. If the noise is only strong at some frequencies, then the other frequencies in the original trace will be left untouched. If all the traces are noisy and the noise has random phase, then, since we treat the sine and cosine terms separately, we can still estimate the probable signal. However, if the noise is strong and coherent, the only remedy is to increase the number of traces in the window; otherwise the noise will be treated as signal and kept. As of now we place no limit on signal strength and so have no way to identify this as noise.

Applications

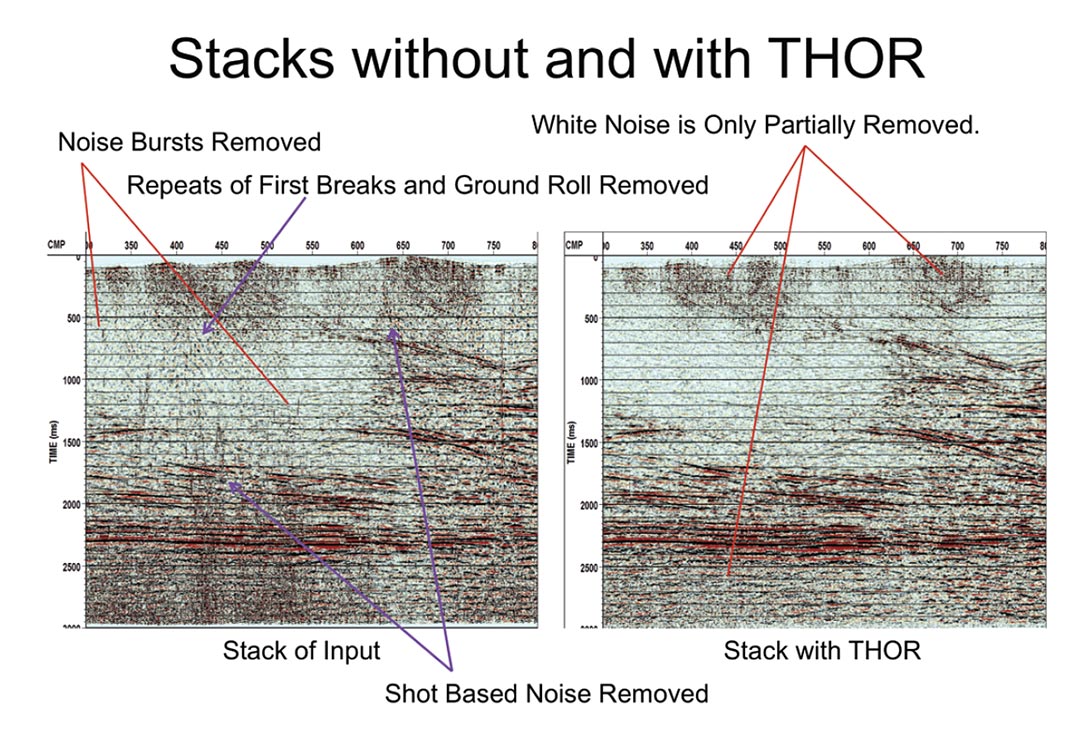

We now have a good way to run a median across our CDP gathers and replace values when the difference between the trace value and the median exceeds a threshold. Some stacked results are shown in Figure 6.

As expected, the single CDP noise bursts seen previously have been completely removed, as has the shot based noise. Note that THOR is not a dip filter. It looks horizontally across CDP gathers. Successive traces in a shot gather fall into different CDPs, so the shot based noise is not coherent within any single CDP.
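
The geometry behind this can be sketched with the usual midpoint binning for a 2D line. The shot position, receiver spacing and CDP spacing below are hypothetical values chosen for the illustration.

```python
def cdp_number(shot_x, receiver_x, cdp_spacing):
    """Bin index of the source-receiver midpoint along a 2D line."""
    midpoint = 0.5 * (shot_x + receiver_x)
    return int(midpoint // cdp_spacing)

# One shot recorded by six receivers at 25 m spacing (hypothetical geometry).
shot_x = 1000.0
receivers = [shot_x + 25.0 * i for i in range(1, 7)]
cdps = [cdp_number(shot_x, r, cdp_spacing=12.5) for r in receivers]

print(cdps)
# prints: [81, 82, 83, 84, 85, 86]
```

Each trace of the shot lands in a different CDP, so noise tied to that one shot appears on only a single trace of any given CDP gather.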

The ground roll and repeats of first breaks have similarly been easily removed. They are restricted in frequency, so the median need only attack those frequencies and can leave the others untouched. Even if this noise pervades all the shots, we can often get a reasonable estimate of the signal underneath it for those frequencies. This occurs because the sine and cosine terms are considered separately. If the noise on a trace is primarily, say, cosine, then the sine term will show the signal amplitude. Provided the noise in CDP mode is incoherent horizontally and provided enough traces are looked at, the low frequency signal amplitudes can be estimated.
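
A contrived example of this effect is sketched below. Every one of the 7 traces is noisy at this frequency, but the noise falls on the cosine term of some traces and on the sine term of others, with varying sign. The signal and noise values are invented for the illustration.

```python
from statistics import median

def componentwise_median(coeffs):
    """Median of the cosine (real) and sine (imaginary) terms taken separately."""
    return complex(median(c.real for c in coeffs), median(c.imag for c in coeffs))

# The same signal coefficient on every trace (illustrative value) ...
signal = complex(3.0, 4.0)
# ... plus strong noise whose phase differs from trace to trace.
noise = [20.0, 30.0j, -20.0, -30.0j, 20.0, 30.0j, -30.0j]
traces = [signal + n for n in noise]

estimate = componentwise_median(traces)
magnitude_median = median(abs(c) for c in traces)

print(estimate)           # (3+4j): the signal is recovered although every trace is noisy
print(abs(signal))        # 5.0: true signal amplitude
print(magnitude_median)   # far larger than 5.0: a median of magnitudes is fooled
```

In this example a median of the amplitude alone would keep most of the noise, whereas treating the two terms separately lets the clean term of each trace contribute to the estimate.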

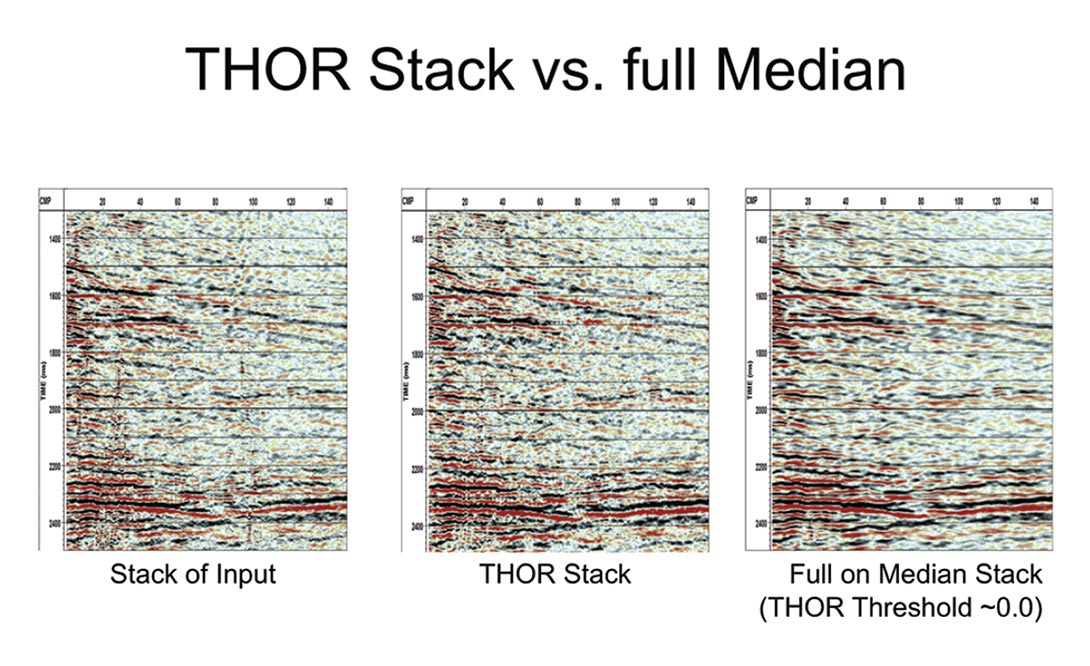

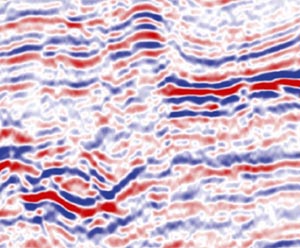

Notice also that the white noise has only been partially removed. This is deliberate. If the threshold were set low enough to remove it, then it would force the stack to a median solution. As can be seen in Figure 7 this tends to produce a blocky and synthetic looking section. So in general the median is used to remove the strong noise and the average (stack, prestack migration, white noise suppression) is used to deal with the white noise problems.

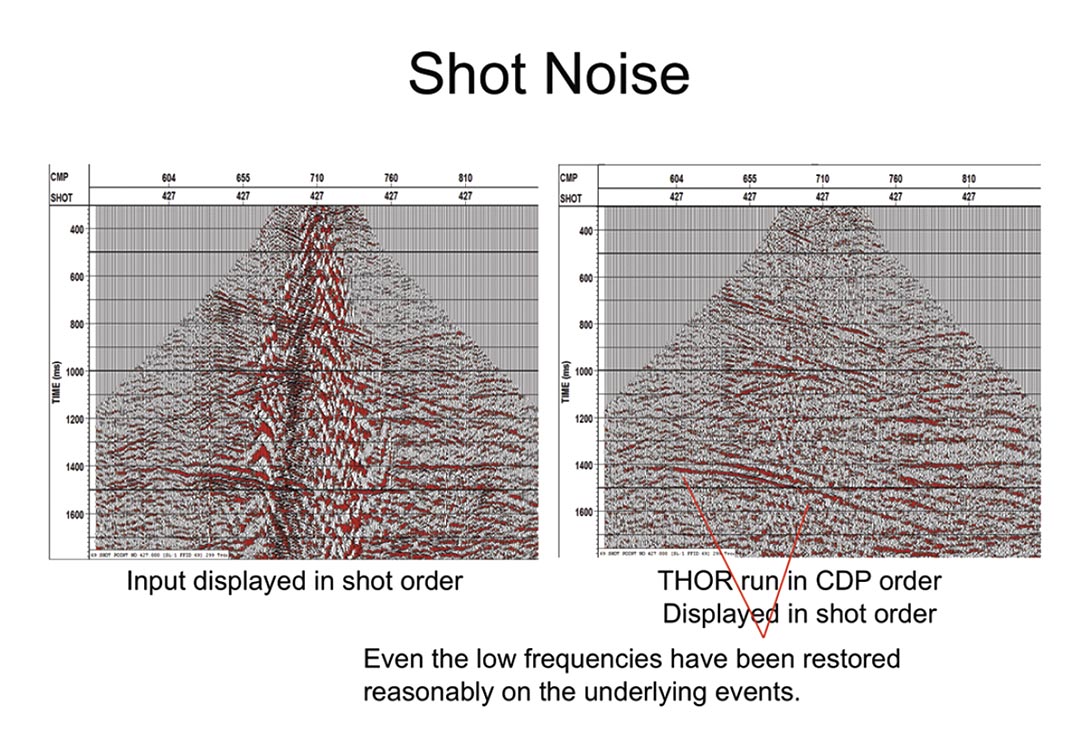

Figure 8 shows that THOR makes a sensible estimate of the low frequency signal underneath the ground roll.

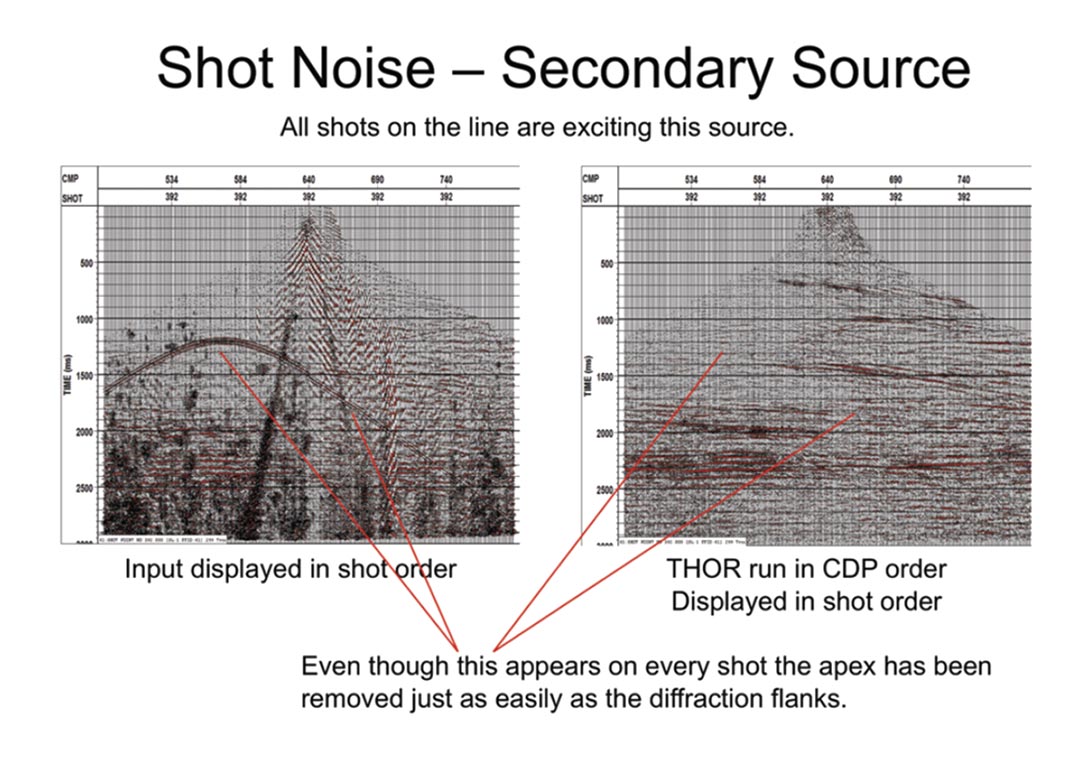

As a test, in Figure 9 I have added an offline secondary source that is excited by every shot on the line. This problem is especially bad when you have volcanics or ice overlying your section. Because the noise is not consistent across the CDP gathers, the apex of the noise is removed just as easily as the diffraction wings. This implies that the program can also be used to remove multiples. Provision has been made for this in the program, but I am not showing any data here.

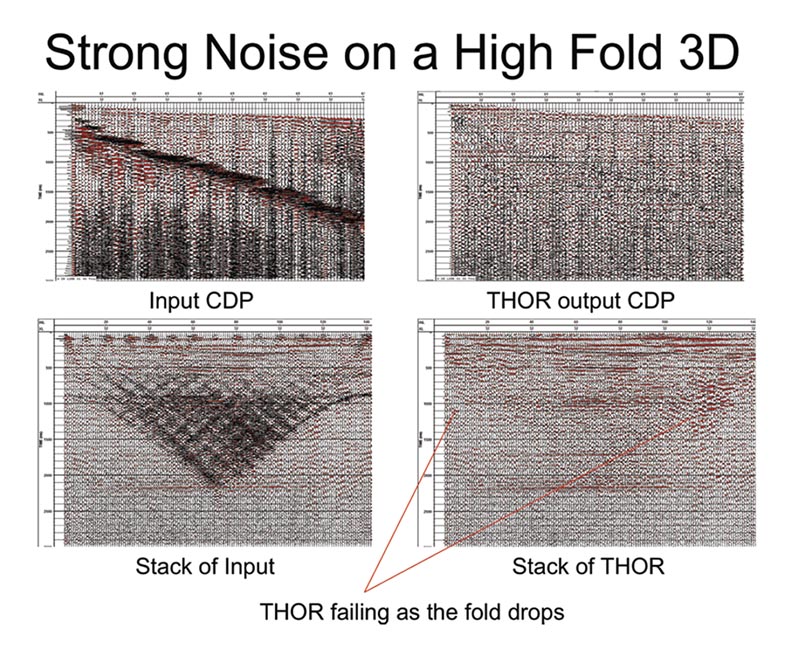

In Figure 10, the data looks very bad in a CDP gather and THOR has made a substantial difference. However, on the stack the improvement is smaller than might be expected, mostly because high fold does cancel noise. Low fold at the edges is also causing problems.

Conclusions

By using short FFT windows in time we both combine the samples belonging to a single event and ensure that, if we modify these amplitudes, the output will still be smooth in time. With this decomposition we can use a median combined with a threshold to replace strong noise in CDP gathers. Because the method does not depend on noise coherency, removal of offline energy, noise bursts, ground roll and repeats of first breaks is very effective. Replacing all the data with the median does work, but the result is somewhat synthetic. This method does recover signal from noise contaminated data.

In short THOR is a robust and effective technology and is suitable for routine processing of seismic data.

Acknowledgements

Thanks to WesternGeco for allowing me to present the details of module THOR from the seismic processing package VISTA® from GEDCO at WesternGeco.
