Abstract

The nature of technology development is to improve on current, established methods. New technology can supersede older technology, but often the older method has certain characteristics (robustness, low cost, ease of use, and so on) that give it longevity in the face of its upstart rival. In seismic data processing for foothills exploration, three technologies developed over recent decades have reduced uncertainty in seismic imaging and interpretation, but they have not replaced their robust, less expensive, and otherwise well-entrenched older siblings. These technologies (3D seismic data, anisotropic depth migration, and tomographic velocity analysis) have complemented rather than supplanted 2D seismic data, prestack time migration, and interpretive velocity analysis, respectively.

Introduction

As our science matures and we have decades of technology to build upon, it is important to ensure that we find that balance between continued technology development and maintaining our skills with established technology. Quoting from the SEG’s 2008 Honorary Lecture, “This is the place and time for re-examination of the basics. By building up from a firm foundation in geoscience basics, implemented using the highest technologies, we are assured of interpreting the first-order effects first” (Lindsay, 2008). Richard Lindsay refers to interpretation technology, but the same can and should be said for data-processing technology.

One of the dangers of developing new technologies is that we, as researchers, like to look to the established methods for a favourable comparison, so that we may claim that our new method is superior to the old method. We in the research community tend to focus on those characteristics of our new technologies that compare favourably to the old. After ten years of developing new technology and documenting case histories that show how anisotropic depth imaging, in its various forms, offers improved imaging accuracy, the authors of this paper intend to step back from that research and look at how different seismic-imaging technologies complement each other in the context of day-to-day seismic processing operations.

Some technologies have not superseded the technologies whose limitations they intend to overcome; rather, each simply complements its older sibling. Three examples of pairs of complementary technologies used in land seismic imaging are:

- 3D seismic data and 2D seismic data

- Anisotropic depth migration and prestack time migration

- Tomographic velocity inversion and human velocity picking

3D versus 2D

The complementary relationship between 2D and 3D results from the difference in data density. Where 2D gives us high resolution in the shallow section so that we may tie our interpretation to surface geology, 3D gives us a three-dimensional image volume of the subsurface.

For years, study after study showed the importance of 3D seismic data. For example, we needed 3D coverage to correct out-of-plane effects, to more accurately map trap closure, and to detect fault details that may show compartmentalization of the reservoir. We could go on. A real 3D survey designer could go on for pages. We even saw papers on how cost effective 3D was in obtaining full subsurface coverage. There are efficiencies to be gained by recording each shot into a full 3D patch rather than a narrow strip of receivers.

One could then make the argument not to shoot 2D seismic data at all and do all exploration in 3D. We suspect that there are geologic settings where this is, indeed, the case. In land seismic, however, especially in areas that are topographically rough or environmentally sensitive, dense 3D coverage can be very expensive even if access restrictions allow high data density. Land 3Ds tend to be wide-azimuth, with moderate fold at the target level, but with low fold and very low coverage in the near surface. First-break energy is not as statistically constrained as in the single-azimuth 2D line, which results in a less resolved refraction-statics solution. In 3D, therefore, we need to rely more heavily on reflection statics to correct for near-surface weathering effects.

We attempt to illustrate the complementary nature of 2D and 3D datasets in Figure 1. Borrowing from an illustration by 3D seismic acquisition expert Norm Cooper (Cooper, 1997), Figure 1 shows how we might look at an image through the sparse coverage of 2D and the lower-resolution coverage of 3D. Figure 1a shows the original photo of a fish dinner. In Figure 1b, we see the image through a grid of lines to emulate 2D coverage. We see high resolution where we have coverage, but there are significant gaps in the coverage. Figure 1c shows how the areal coverage of 3D gives us a complete picture of the plate, fish, and side dishes, despite the lower resolution. In practice, most interpreters combine 2D and 3D datasets to form a more complete interpretation. We attempt to illustrate this in Figure 1d, which overlays the high-resolution 2D coverage on top of the full 3D coverage. Here we see the entire low-resolution image, the 2D coverage makes it clear that the circle on top of the fish is a lime, and a trained eye will determine that the side dishes are rice and plantains.

From our perspective as seismic-imaging practitioners, when creating a depth-migration velocity model, 2D lines across a 3D survey are quite helpful for tying surface geology to the 2D seismic data and then tying the 2D seismic data to the 3D data volume. With less reflectivity in the near surface of the 3D volume and coverage gaps in the shallow section between receiver lines, tying surface geology to seismic data can be a tricky exercise. We have found that performing 2D depth migrations along with a 3D depth project helps us converge to an optimum velocity model more quickly and more accurately. On top of the higher resolution in the shallow section, the 2D dataset is simply smaller, so a depth-imaging practitioner can do more depth-migration iterations in a fraction of the time. This allows the imager to experiment with model interpretations and learn more about the subsurface velocity structure, which he or she may then apply to the 3D model to further improve the 3D depth-imaged volume.

The table below lists just a few examples that illustrate the complementary characteristics of 2D and 3D seismic data sets.

| 2D | 3D |
|---|---|
| higher near-surface coverage for detailed shallow section and tie to surface geology | detect lateral ramps and other features lost between 2D lines |
| refraction data highly redundant for accurate weathering correction | no worries about out-of-plane energy lost between 2D lines |
| lower data size allows for rapid turnover of depth-migration velocity models | wide-azimuthal coverage shows true 3D nature of compressional structures |

2D and 3D seismic acquisition are significantly different methods for illuminating and imaging the subsurface. With limitations in cost and surface access, each acquisition method has its own trade-offs, making each method complementary to the other. 2D seismic data is still very common in land exploration and 3D overcomes the subsurface-coverage limitations of 2D in a variety of play-development and reservoir-management applications.

Depth Versus Time

Even though one of the authors of this paper has written those three words, in that order, dozens of times, this phrase has an unfortunate implication that depth and time migrations are enemies or even competitors. When we use the words “depth” and “time” in this context, we refer to prestack anisotropic TTI depth migration and prestack time migration, respectively. Depth and time could be viewed as rivals, but, for the sake of argument, let’s consider them as complementary teammates in the quest for a subsurface interpretation. The inherent assumptions in time migration reduce the image accuracy compared to depth migration, but these assumptions simplify the process significantly, making time far more robust than depth.

Even if depth migration could replace time migration, depth imaging requires accurate time processing for its input. Statics and velocities are key parameters for an optimized seismic image, whether in time or depth. It is difficult to predict which refraction-statics method will yield optimum results on any given dataset in any geologic setting. We need to test multiple tomographic and time-delay methods to see which algorithm gives the highest coherency of reflection events for each dataset. Uncorrected weathering problems will hamper all subsequent processing efforts. Velocities affect time imaging, and velocity errors can also affect the reflection-statics solution, which is part of the total statics solution applied to the seismic data input to depth migration. Depth migration is a delicate process, and, in noisy data areas, velocity interpretation is difficult. Less-than-optimal statics on the input gathers will add further instability to an already delicate process, and we may not be able to converge to a velocity model that produces a reliable result.

In addition to the concerns about the quality of input data, we need a solid prestack-time-migrated image to interpret the structural horizons for the depth velocity model. Without a solid prestack time migration, we would not know where to start with our model interpretation.

Completely aside from the issues of a starting point for depth migration, prestack time migration is still an industry standard for exploration in a range of geologic settings. This robust algorithm is essential in structured land environments. Prestack time migration has had over three decades of continuous refinement (for example, Sattlegger and Stiller, 1973; Jain and Wren, 1980; Biondi and Palacharla, 1996), which has produced the robustness and coherency that we enjoy today. We certainly hope that researchers continue to improve prestack Kirchhoff time migration, and we are currently developing a more accurate rough-topography correction for this tried-and-true algorithm. We already have promising results from this work, which we plan to publish in the coming year.

Velocity analysis in prestack-time migration may use a simple yet highly effective, time-proven scanning approach to estimate the RMS imaging velocities. At any single point in the subsurface, the processor picks an RMS velocity that gives us the clearest picture. This RMS velocity, on average, corrects for all of the velocity effects above that reflector. The robustness of the prestack-time algorithm comes from the ability of the practitioner to focus in on isolated locations in the seismic image and pick the most interpretable velocity panel at that subsurface location. In contrast to depth migration, we can happily ignore what is going on in the rest of the seismic section while we myopically focus on one specific reflector and make it image as clearly as we can.
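To make concrete the idea that an RMS velocity corrects, on average, for everything above the reflector, here is a minimal sketch that computes RMS velocity from a stack of flat layers. It assumes a simple layered earth; the function name `rms_velocity` and all interval velocities and traveltimes are hypothetical values chosen for illustration, not numbers from any dataset discussed here.

```python
import math

def rms_velocity(interval_velocities, interval_times):
    """RMS velocity down to the base of the last layer.

    interval_velocities: layer velocities in m/s (hypothetical values)
    interval_times: two-way traveltime spent in each layer, in seconds
    """
    if len(interval_velocities) != len(interval_times):
        raise ValueError("need one traveltime per layer")
    total_time = sum(interval_times)
    if total_time <= 0:
        raise ValueError("total traveltime must be positive")
    # Time-weighted mean of the squared interval velocities.
    weighted = sum(v * v * t for v, t in zip(interval_velocities, interval_times))
    return math.sqrt(weighted / total_time)

# Illustrative three-layer model (assumed numbers).
velocities = [2000.0, 3000.0, 4500.0]  # m/s
times = [0.4, 0.6, 0.5]                # s, two-way time per layer

for n in range(1, len(velocities) + 1):
    v = rms_velocity(velocities[:n], times[:n])
    print(f"base of layer {n}: Vrms = {v:.0f} m/s")
```

Each RMS value folds every overlying layer into a single number, which is why a processor can pick it at one location without building a model of the whole section.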

Depth imaging, in contrast, is far more delicate. If we do not get the velocities accurate in the near-surface model, it is difficult, if at all possible, to use detailed analysis in the deeper section to correct for velocity errors in the shallow section. Errors in near-surface velocities and horizon geometries conspire to degrade our depth image. Where one may generate a coherent time image by focussing only on the area of interest, in depth imaging one must consider the entire velocity structure through which the seismic energy has travelled between surface and reflector. On the positive side for depth imaging, when we have a velocity model that is able to image the target with reasonable coherency, we have come a long way toward correcting for a range of wave-propagation effects resulting from lateral-velocity heterogeneity and seismic anisotropy in the layers above the target. Depth imaging is predictive, and the reflectors are imaged in more accurate positions (e.g., Vestrum et al., 1999) than with its more handsome and robust brother, the time image. The additional effort required by depth migration results in reduced exploration risk.
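The sensitivity of the depth image to the near surface can be sketched with a deliberately simplified calculation. The example below is only a vertical-ray time-to-depth conversion, not a depth migration, and it ignores lateral positioning and anisotropy entirely. The function name `reflector_depths` and the layer values are assumptions made for illustration.

```python
def reflector_depths(interval_velocities, interval_times):
    """Depth to the base of each layer for vertical raypaths.

    interval_times are two-way times in seconds, so each layer's
    thickness is velocity * time / 2.
    """
    depths = []
    depth = 0.0
    for v, t in zip(interval_velocities, interval_times):
        depth += v * t / 2.0
        depths.append(depth)
    return depths

times = [0.4, 0.6, 0.5]                 # s, two-way time per layer (assumed)
true_model = [2000.0, 3000.0, 4500.0]   # m/s (assumed)
# Same model with a 10% velocity error in the near-surface layer only.
wrong_model = [2200.0, 3000.0, 4500.0]

true_depths = reflector_depths(true_model, times)
wrong_depths = reflector_depths(wrong_model, times)
for n, (zt, zw) in enumerate(zip(true_depths, wrong_depths), start=1):
    print(f"reflector {n}: true {zt:.0f} m, shallow error {zw:.0f} m, shift {zw - zt:+.0f} m")
```

Even in this one-dimensional case, the error in the first layer shifts every reflector beneath it by the same amount, although the deeper velocities are exactly right.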

The table below illustrates a few of the characteristics of time and depth migrations and how these characteristics complement each other.

| time | depth |
|---|---|
| robust: nearly always get a clear, basic image | predictive: needs accurate model to image |
| velocity model independent of geology | velocity model ties with geology |
| more coherent image | more accurate position of reflectors |
| allows constant-velocity movie analysis to find reflectors hidden in the noise | corrects complex raypaths to image steep dips or fault edges that time cannot image |

Prestack time migration and prestack anisotropic depth migration are complementary technologies, and we do not believe that we will see one replace the other. As these technologies evolve and we find ways to improve the accuracy or runtime of these two fundamentally different approaches to seismic imaging, we anticipate that explorationists will still need two imaging algorithms, one emphasizing robustness and the other accuracy.

Automated versus manual velocity analysis

Here we compare automated reflection tomography, which extracts velocity information from the seismic data, with an experienced human practitioner who uses interactive diagnostics to manually pick seismic velocity functions or interpret a geologically constrained velocity model.

These two approaches to estimating seismic velocities are sometimes complementary, but more often one or the other will apply better to a particular dataset. Tomography is a hot topic in geophysical research, with multiple complete sessions at the 2008 SEG convention dedicated to tomographic velocity analysis. Unfortunately, in a foothills setting, low signal-to-noise ratios often render automated tomography methods unstable. In areas where automated tomography breaks down, we must revert to an interpretive methodology (e.g., Murphy and Gray, 1999; Vestrum, 2007) and use as many geologic constraints on the subsurface model as possible to maximize the probability of success in creating a depth image of comparable quality to the prestack time migration.

| automated | manual |
|---|---|
| velocity updates derived purely from the seismic data | velocities constrained by geologic interpretation |
| fine velocity detail and highly accurate velocity correction | stable velocity analysis in the face of low fold and/or high noise |

These two technologies are not so much complementary as suited to different geologic settings. We mention them because they are a good example of continued technological development along two separate, potentially competing paths. Computing technology improvements in data storage, CPU speed, and graphical visualization have all conspired to deliver significant improvements in the interpretation-driven velocity-analysis workflow.

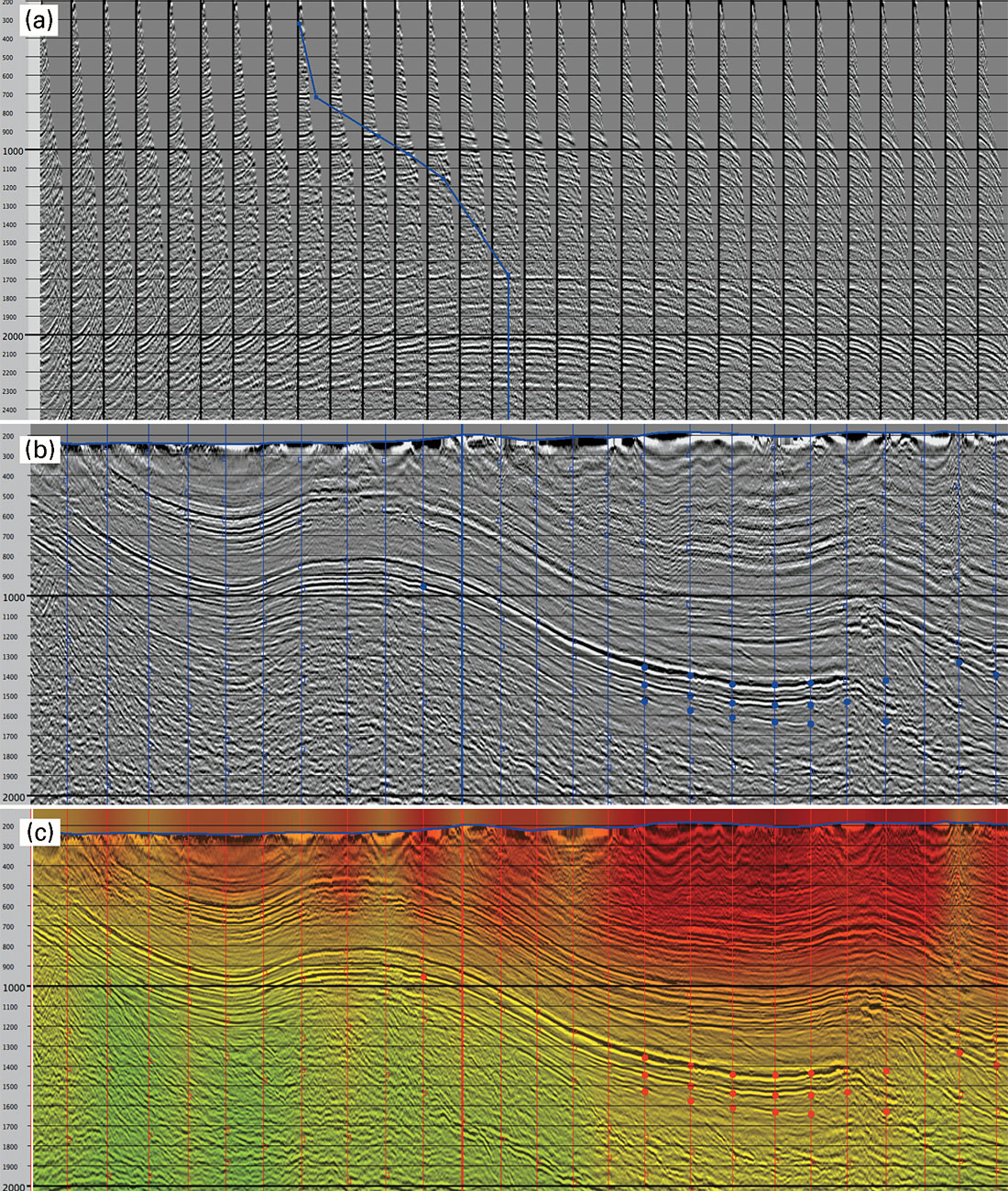

A good example from foothills data processing is velanal from Techco Geophysical. Figure 2 shows screen captures of three seismic displays used in manual velocity picking, which Vestrum (2007) describes as part of an industry-standard, geologically constrained time-migration workflow.

The data for this interactive picking comes from 40 or more constant-velocity time migrations output to an analysis grid. Each constant-velocity migration generates two types of output: (1) full migrated section for each control line and (2) migrated image gathers for each control point on the control lines. These two seismic data sets are then loaded into the interactive velocity analysis tool.

The image-gather window (Figure 2a) shows a single CDP image gather migrated at all 40 different prestack-time-migration velocities. When the data has relatively high signal-to-noise ratios on the prestack gathers, one may use this display to quickly refine the velocity picks at a control point. Where prestack signal is low and the image gathers are ambiguous, or where the interpreter has concerns about the velocity sensitivity of the target reflectors, we flip to the stack-panel window (Figure 2b), where we can animate through all of the constant-velocity panels to assess reflector coherency, migration-operator noise, and the sharpness of reflector terminations. This analysis requires seismic-imaging experience and should be done in collaboration with the interpreter. Throughout the velocity-picking process, a composite stack of the input velocity panels (Figure 2c) closely simulates the prestack time migration that would result from the current velocity field, for further quality control.
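One way to picture a composite stack of constant-velocity panels is as a sample-by-sample blend of the panels that bracket the picked velocity. The sketch below assumes linear interpolation between the two nearest panels. We do not claim that any particular tool builds its composite this way; the function name `composite_trace`, the panel values, and the picks are all hypothetical.

```python
from bisect import bisect_right

def composite_trace(panel_velocities, panel_traces, picked_velocities):
    """Blend constant-velocity panels into one trace.

    panel_velocities: ascending list of migration velocities (m/s)
    panel_traces: panel_traces[i][k] is sample k of the trace migrated
        at panel_velocities[i]
    picked_velocities: picked velocity at each time sample k
    """
    out = []
    for k, v in enumerate(picked_velocities):
        # Clamp picks that fall outside the scanned velocity range.
        v = min(max(v, panel_velocities[0]), panel_velocities[-1])
        # Index of the panel at or just below the pick.
        i = bisect_right(panel_velocities, v) - 1
        if i >= len(panel_velocities) - 1:
            out.append(panel_traces[-1][k])
            continue
        v0, v1 = panel_velocities[i], panel_velocities[i + 1]
        w = (v - v0) / (v1 - v0)
        out.append((1.0 - w) * panel_traces[i][k] + w * panel_traces[i + 1][k])
    return out

# Three hypothetical panels with four time samples each.
velocities = [2000.0, 3000.0, 4000.0]
panels = [
    [1.0, 1.0, 1.0, 1.0],
    [2.0, 2.0, 2.0, 2.0],
    [3.0, 3.0, 3.0, 3.0],
]
picks = [2000.0, 2500.0, 3000.0, 4500.0]
print(composite_trace(velocities, panels, picks))
```

Because the composite is assembled from panels that have already been migrated, it can be refreshed after every pick without rerunning the migration, which is what makes it useful for interactive quality control.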

Discussion and Conclusions

There are certainly many other newer technologies in our field that complement, rather than replace, the previous technology. An example of a comparison that highlights the trade-offs between newer and older technology is the thorough comparison between Kirchhoff and wave-equation migrations by Bale and Gray (2008).

Often when we develop a new method, we focus our efforts on overcoming the limitations of the currently established technology. The mere existence of the established technology gives us the luxury of focussing development efforts on the specific problem at hand as an augmentation or enhancement to our current technology.

Three technologies used in land seismic imaging do not supersede the technologies whose limitations they overcome. Rather, depth migration, 3D acquisition, and automated tomography simply complement their older siblings: time migration, 2D acquisition, and manual velocity picking.
